I was out at All-Con this weekend along with the whole crew from the Dallas Personal Robotics Group. Eric Chaney and I spent a lot of time trying to recruit a few more new members for our Dallas Hacker/Maker space. Also did some demos of the Noise Boundary robotics music project and shot a lot of photos. Jerry Chevalier brought his excellent Robbie and Gort movie robot replicas. The Assassination City Derby Girls were there, as well as some of the best Dallas cosplay people. You can check out the photos in my All-Con 2010 Album on Flickr and maybe get a quick view of them below if the Flickr embed code is working…

Tag Archives: robots

British Government Passes the Turing Test?

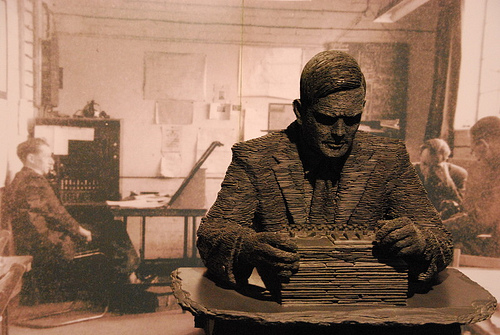

Anyone with even a passing interest in AI or robotics knows who Alan Turing is. Sometimes referred to as the “father of AI,” Turing was interested in the question of “intelligent machinery” as early as 1941. He helped secure an Allied victory in World War II with his cryptanalysis of the German Enigma. But among roboticists, he’s known for his work on the halting problem, the Turing machine, the Church-Turing thesis, the Turing Test, and the Automatic Computing Engine, not to mention the Turing Award, which is named in his honor. Equally well known are his persecution, legal prosecution, and forced chemical castration by the British government, whose treatment of him is believed to have led directly to his suicide in 1954. While too late to help Turing, there is good news from the UK, where British prime minister Gordon Brown has officially apologized:

Thousands of people have come together to demand justice for Alan Turing and recognition of the appalling way he was treated. While Turing was dealt with under the law of the time and we can’t put the clock back, his treatment was of course utterly unfair and I am pleased to have the chance to say how deeply sorry I and we all are for what happened to him. Alan and the many thousands of other gay men who were convicted as he was convicted under homophobic laws were treated terribly. Over the years millions more lived in fear of conviction.

The apology was the result of a petition with over 30,000 signers started by British free software programmer John Graham-Cumming. If you’d like to help preserve Turing’s memory, how about a contribution to Bletchley Park Trust?

CC-licensed photo of slate Alan Turing sculpture at Bletchley Park by flickr user blinkenlichts

VEX Protobot Unboxing

Here’s a quick post possibly foreshadowing a future review. The UPS guy delivered a big red box yesterday containing the new VEX Protobot Robot Kit Dual Control Starter Bundle. I was on my way out to a meeting of the Dallas Personal Robotics Group at the time, so I took the box with me and got Glenn Pipe of the DPRG to shoot a quick VEX Protobot Unboxing video. Enjoy.

There’s More Than One Way to Skin a Robot

This morning I noticed this request from Ben Goertzel on the AGI mailing list:

Does anyone know if it’s possible to purchase some sort of artificial skin for a robot, to enable it to “feel” things that touch it? Even something fairly crude would be worthwhile…

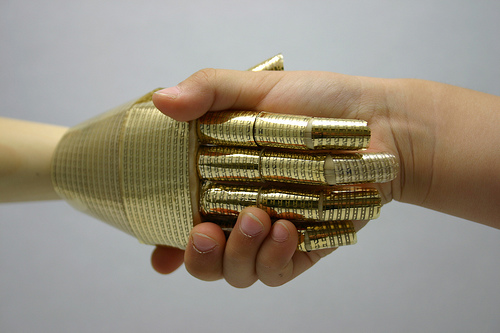

I’ve posted about a lot of robot skin research initiatives over the years at robots.net. The first thing to notice is that there are several possible applications for which something called robot skin might be needed. The first is a flexible human-like skin to cover the bodies of androids or prosthetic devices. This would involve several unique properties not usually needed in other robot skin applications: 1) the skin needs to be self-healing, 2) the skin needs to be flexible and soft, and 3) the skin needs to radiate the same level of heat as human skin. Android skin needs one more thing that is shared with all robot skin applications: the need to sense the environment. Human skin has sensors for a number of properties including pressure, heat, vibration, and pain, some of which combine to form our perception of touch.

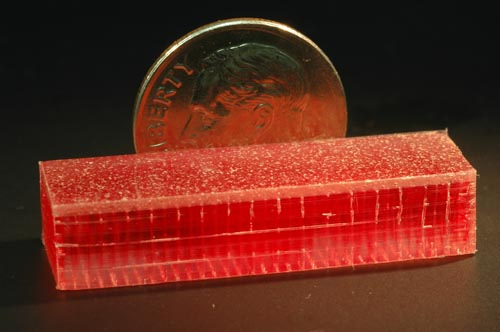

Self Healing Skin

The Microvascular Autonomic Research Initiative at the University of Illinois at Urbana-Champaign has developed a bio-inspired material with an embedded microvascular network that mimics human skin. The network supplies a healing agent to damaged surface regions, allowing the material to autonomically heal crack damage to the same location up to 7 times. For all the details, see their research paper, Self-healing materials with microvascular network (PDF format). Also check out the videos of network cracking and healing agent delivery. At present this self-healing skin exists only as research prototypes; there is no commercially available material yet.

Life-like Flexible Skin

The leading edge of research in android skin that looks and feels life-like is Hanson Robotics, in Richardson, Texas. David Hanson has developed a life-like compound called Frubber that is used on the androids developed at the company. This material has moved beyond the research stage and has been used on commercially delivered products by Hanson Robotics. To see more examples of Frubber, take a look at my photos from the Hanson Robotics lab that I shot in May 2009.

Life-like Body Temperature Skin

Current research in this area has used resistive printed circuits to heat the overlaid skin. A typical example can be found in the article, Bleeding Edge: Flex Circuits as Robot Skin Sensors, by Robert Tarzwell and Ken Bahl of Sierra Proto Express. In the article, they describe how screen-printed carbon resistor tracks can be laid down on a flexible substrate using a conventional prototyping process to create heated skin suitable for androids. Of course, the first question that comes to mind is how much of a power drain this method would be for an android that has to operate on a portable power supply. The primary demand for this particular skin feature seems to be in the sex doll market. Real Doll (NSFW) has investigated the technologies required for skin heating but not yet found a method they deem practical. At present their customers rely on methods such as immersion in hot water or wrapping in electric blankets to temporarily raise the body temperature of the dolls. That’s a Real Doll number 6 body with a number 9 face pictured above, in case you were wondering.

Research on Robot Skins with Embedded Sensors

The most useful type of skin, needed by both conventional robots and androids, is skin that can sense the environment. There are a number of approaches for creating skins with sensors. The Someya Lab at the University of Tokyo is researching methods of manufacturing flexible skin with an integrated matrix of organic transistors. They’ve created a prototype robot hand covered in the sensors. Their material has a mobility of 1.4 cm²/V·s and can function even when wrapped around a cylinder with a 2 mm radius. At present the material is not being produced in commercial quantities. More details can be found in the paper, A large-area, flexible pressure sensor matrix with organic field-effect transistors for artificial skin applications (PDF format).

Another approach, taken by researchers at the Mesoscale Engineering Lab of University of Nebraska, uses metal and semi-conducting nano-particles that self-assemble into a thin-film device that generates electroluminescence in proportion to stress. A flexible CCD reads the light emissions, resulting in both lateral and height resolution of texture that is comparable to the resolution of human touch sensitivity on the finger tip at a pressure of 10 kilopascals. Or to translate that into English, this sensor can touch a penny and generate a legible impression of the engravings on the surface of the copper. At present this material is not being produced commercially. For all the technical details see the paper, High-Resolution Thin-Film Device to Sense Texture by Touch (PDF format).

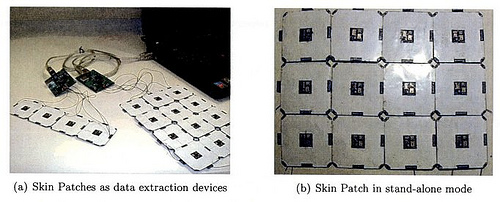

Gerardo Barroeta Perez, while at the MIT Media Lab, proposed an artificial sensate skin he called SNAKE (Sensor Network Array Kapton Embedded). SNAKE was an array of dynamically reconfigurable sensor nodes which could sense strain, bending, proximity, pressure, light, temperature, and even audio. Each node also included I2C bus connections and processing capabilities to extract higher order features such as shadow forms and pressure gradients. Prototype sensor nodes were built but, like other projects, this one never reached commercial availability. You can read about it in great detail in Perez’s 211-page thesis, S.N.A.K.E.: A Dynamically Reconfigurable Artificial Sensate Skin (PDF format).

Practical Robot Skin

While the previously mentioned research prototypes of robot skin all sound pretty nifty, they aren’t much help if you’re working on a commercial product today. But there are some commercially viable robot skins available if you’re willing to settle for limited resolution and capabilities. For example, researchers from Tokai University demonstrated an easy-to-manufacture skin for robot pets using readily available materials that included an array of off-the-shelf shock sensors, a layer of metal foil, and rubber skin. This inexpensive skin was able to distinguish four typical ways humans have of touching pets: a “tickle”, “rub”, “scratch”, and “stroke”. A short technical explanation is available in the paper, Stimulus Distinction in the Skin of a Robot Using Tactile and Shock Sensors (PDF format).

Capacitive touch sensors were used on the Pleo robot to give a primitive sense of touch through the rubber skin. The engineers reported problems overcoming interference caused by having a movable, flexible rubber skin in between the capacitive sensor and the objects it’s supposed to sense. However, they did make it work and it’s a very inexpensive solution. The technical and engineering details are proprietary but you can get a rough overview from the HowStuffWorks page, Pleo’s Sensory System. PlanetAnalog offers a general tutorial on constructing capacitive touch sensors with off-the-shelf parts and the associated electronics needed. Capacitive touch sensors are commonly used on laptops, cell phones, and PDAs, so small sensors can be obtained from a wide range of suppliers as well as in lesser quantities from electronic surplus dealers.

Resistive sensors offer a similar off-the-shelf possibility for crude touch and pressure sensing. Smaller sensors can be arranged in arrays as needed and are available from industrial electronics suppliers as well as hobby suppliers such as Trossen Robotics.
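As a rough sketch of how an array of resistive sensors might be read, assume each sensor sits on the high side of a simple voltage divider, with a fixed resistor to ground, and that the divider output is sampled by an ADC. The resistor value, ADC resolution, touch threshold, and function names below are all illustrative assumptions, not values from any particular supplier's datasheet.

```python
# Minimal sketch: turn raw ADC counts from a grid of resistive touch
# sensors into a list of touched cells. All constants are assumptions.

R_FIXED = 10_000.0   # assumed fixed divider resistor to ground, in ohms
ADC_MAX = 1023       # assumed 10-bit ADC, full scale equals supply voltage


def adc_to_resistance(count):
    """Estimate sensor resistance from one ADC reading.

    With the sensor between the supply and the ADC pin, and R_FIXED
    between the ADC pin and ground:
        Vout / Vcc = R_FIXED / (R_sensor + R_FIXED)
    so  R_sensor  = R_FIXED * (ADC_MAX - count) / count.
    """
    if count <= 0:
        # No measurable current: treat the sensor as an open circuit.
        return float("inf")
    return R_FIXED * (ADC_MAX - count) / count


def touched_cells(frame, threshold_ohms=50_000.0):
    """Return (row, col) for every cell whose resistance is below threshold.

    `frame` is a list of rows of raw ADC counts, one count per sensor.
    Pressure lowers the resistance of this kind of sensor, so a low
    resistance is read as a touch.
    """
    return [
        (r, c)
        for r, row in enumerate(frame)
        for c, count in enumerate(row)
        if adc_to_resistance(count) < threshold_ohms
    ]
```

On real hardware the frame would come from stepping a multiplexer across the rows and columns; here it is just a nested list so the arithmetic can be tried out on a desktop first.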

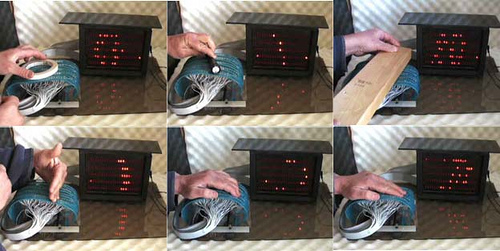

A common trick among electronics hobbyists is using an LED as both a light source and a sensor. By using inexpensive, off-the-shelf LED arrays, one can construct a reflective touch sensor. Unless you’re going for Rudy Rucker’s flicker-cladding approach to robot skins, having a robot covered in bright flickering lights might be a bit annoying. For an example see Jeff Han’s web page on LED touch sensors, which includes the video demonstration, as seen above.

Another hopeful method for having at least a crude touch sense with currently available technologies is the use of flex-actuated bistable domes on a flexible circuitry laminate. Think back to those old calculators that used flexible circuits covered with little bubbles that were depressed by the pushbuttons. Now imagine an array of many smaller on/off bubbles covering a large flexible sheet. This method seems pretty straightforward but may not be commonly used because it’s covered by a patent with the associated costs involved in patent royalties. To see an example of this technique in use, see the patent-holder’s website, bistabledome.org.
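Since each dome is simply on or off, one scan of such a sheet is just a grid of ones and zeros. Here is a minimal sketch of how a robot might reduce one scan to "how big is the contact and where is it". The grid layout and the function name are hypothetical, chosen only for illustration, and have nothing to do with the patent-holder's actual electronics.

```python
# Minimal sketch: summarize one scan of a hypothetical on/off dome array.

def contact_summary(frame):
    """Summarize which domes are pressed in one scan.

    `frame` is a list of rows, each a list of 0/1 (or False/True) values,
    one per dome. Returns None when nothing is pressed, otherwise a dict
    holding the number of pressed domes and their centroid as (row, col).
    """
    pressed = [
        (r, c)
        for r, row in enumerate(frame)
        for c, on in enumerate(row)
        if on
    ]
    if not pressed:
        return None
    n = len(pressed)
    centroid = (
        sum(r for r, _ in pressed) / n,
        sum(c for _, c in pressed) / n,
    )
    return {"count": n, "centroid": centroid}


if __name__ == "__main__":
    scan = [
        [0, 0, 0],
        [0, 1, 1],
        [0, 1, 1],
    ]
    print(contact_summary(scan))
```

The count gives a crude stand-in for contact area, and tracking the centroid from scan to scan would give a rough idea of whether something is sliding across the skin.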

Other approaches not mentioned in detail here include the use of arrays of capacitor elements, conductive polymer composite films, and a variety of nanomaterials such as aligned carbon nanotubes and nanocomposites. I’ve probably left out others. If you know of any I’ve missed, feel free to post a comment, with links if possible.

More Research Needed

As you can see, more research is needed to find a practical and cost-effective way of manufacturing robot and android skins. It’s interesting to note that this is on the agenda for both EU and US robot research. The recently released Roadmap for US Robotics Research specifically mentions the need for sensor skins for use in prosthetics as a 15-year goal. Such skin could easily be adapted for other robotics uses. The EU, meanwhile, has created a research project called Roboskin whose goals include developing a practical skin capable of large area tactile feedback as well as developing cognitive architectures for integrating data from the sensor skins.

To sum it all up, and actually answer Ben’s question, there are a lot of promising robot skin technologies under development but nothing like a large scale sensor skin you can purchase and wrap your robot in today. However, there are several inexpensive, off-the-shelf technologies that can be used right now to create a workable robot skin, some of which have been successfully used in commercial robots already.

May Miscellany

Time for a quick update. May started off with the VEX Robotics World Championship here in Dallas. I was one of the judges evaluating the 270 teams and their robots. I’ll probably write a little more about it in an upcoming issue of Robot Magazine for those who are interested.

I created a Twitter feed and a Facebook page for robots.net this month. So far the Facebook page is ahead with over 160 fans while the Twitter feed has only about 38 followers. To be fair, the Facebook page went online a couple of weeks earlier, so we’ll see if it hangs on to the lead over time.

I’m still struggling to find time to devote to mod_virgule but squeezed in a few more hours of C coding on the new HTML parser. It’s now running on a test server with a subset of Advogato’s database. So far, so good. Blog aggregation and parsing seem to be working, as do local blog posting, article posting, and article comments. The magnitude of the changes makes this update a bit scarier than usual for robots.net and Advogato. If nothing breaks in the next week or so of testing, though, I’ll cross my fingers and make it live.

I continue to drag my Canon 40D around with me everywhere and since my last blog post, I’ve shot photos of the Funky Finds Spring Fling craft show in Ft. Worth, the Aveda Walk for Water event in Dallas, the aforementioned VEX Robotics World Championship, the Cottonwood Arts Festival in Richardson, the 2009 DFW Dragon Boat Festival in Las Colinas, oh, and a few pics of my friends at Vivanti Group in Deep Ellum. In the retro-photo department, I posted some BW 127 photos shot with a Kodak Brownie Reflex Synchro. Yesterday, a package arrived containing that rarest of things, color 127 film, from a small manufacturer in Canada. I’ll probably run a roll through the Bencini Comet S sometime soon.

2009 VEX Robotics World Championship

It all started when Tom Atwood of Robot Magazine asked me to attend the VEX Robotics World Championship to shoot photos of the more than 270 teams from around the world competing in the event. Before I even knew what happened, I found myself enlisted as one of the judges for the event. Judging the small contests held by local robot groups can be a lot of work but it pales in comparison to the efforts needed for something like the VEX championship. Read on for the full story.

The judging panel itself varied between 10 and 15 individuals over the course of the three day event. In addition to the judges, there were dozens of others acting as referees, score keepers, and doing data entry to feed information to the judges. At any given moment there were usually 16 or more teams involved in at least four matches. The scores only form part of the input for the judging. Several of the judges spent their entire days in private meetings with each team to evaluate their engineering notebooks and robots. Other judges, including myself, spent their days wandering through the pit area, talking to members of each team, asking questions about their robot, team structure, engineering approach, and other questions.

By the time a team was recommended for an award, they had often been interviewed by multiple groups of judges several times. Even so, I had my doubts going in that this subjective approach could really pick the best candidates. My doubts were dispelled as the scores from the matches began rolling in and we frequently saw the same teams who stood out in the subjective analysis of the pit judges climbing in the competition scores as well. What this meant to me is that the teams with good communications, well defined engineering strategies, and good ideas also tended to build winning robots.

But enough about the judging, what was the VEX Championship like? Blue hair and pirate costumes seemed to be the most popular fashions among teams. But there were also plenty of mohawks, fauxhawks, and colorful regional garb from around the world. I was pleasantly surprised to see so many girls on the teams. Engineering and robotics are no longer male-only fields.

Each match consists of four teams playing in pairs. Two blue teams work together against two red teams. The robots must acquire red or blue foam blocks and deposit them in one of three types of containers to gain points. Unlike many high-school level events, the VEX contest is not just another remote-control vehicle contest. While the majority of each match is spent with the robots in teleoperated mode, the contest emphasizes autonomy as a goal of building robots. The robots must operate autonomously for a portion of time at the beginning of each match. Robots that are capable of scoring autonomously give the teams a much better chance of winning. There is also a college level contest in which only autonomous action is allowed but the vast majority of time is spent with the middle and high school level matches.

The first day of the event is spent in practice and preparation. Each team must have their robot inspected by officials to verify that it meets the rules. Teams then spend most of their time running the robots on the test fields in test matches as they fine tune the operation and work out bugs. The next two days are spent in elimination matches and eventually playoffs to find the winners. There are also various breaks in the matches to present awards.

If you’d like to see more of the event, don’t worry, I shot more photos than you could possibly ever want and posted about half of them on flickr, so go ahead and have a look at the VEX Robotics World Championship photo gallery.